Invisible Workers

While the world marvels at ChatGPT’s eloquence and DALL-E’s creativity, a silent army of millions toils in the shadows. They are not robots, but humans—data labelers, content moderators, and infrastructure engineers—feeding the very machines that promise to replace them. This is the untold story of the invisible workforce powering the AI revolution.

The Ghost in the Machine

Artificial intelligence did not enter the workplace with the launch of ChatGPT. For over a decade, AI-driven algorithms have been the invisible engine behind web search, logistics optimization, fraud detection, and personalized advertising [^14^]. What changed in late 2022 was merely the interface: we finally gained the ability to talk to the ghost. But the ghost itself has been haunting our infrastructure for years.

According to the International Labour Organization (ILO), an “invisible” workforce stands behind every chatbot response, social media algorithm, and automated system we now take for granted [^4^]. These workers fall into two primary categories: content moderators, who review harmful material to keep platforms safe, and data labelers and annotators, who tag and structure raw data so machines can learn from it. Wherever unions speak with these workers, they hear strikingly similar accounts: extreme pressure, constant monitoring, low wages, and severe mental health harms.

The Human Cost of “Clean” Data

Sociologist Antonio Casilli has spent a decade documenting what he calls the “invisible and precarious workers” behind every revolutionary AI product. In 2022, he spent a week in Madagascar at a company where hundreds of people annotated and prepared AI data for consulting firm Capgemini—for just €90 a month [^3^]. When confronted with evidence of this exploitation, senior managers responded with “deafening silence.”

🔴 The Scale AI Paradox

Scale AI, a leading data annotation firm, became a service provider to most major AI companies, including Google, OpenAI, and Meta. It paid its global contractors a pittance while growing into a multibillion-dollar company. In June 2025, Meta acquired a 49% stake in Scale AI for approximately $14.3 billion, and founder Alexandr Wang joined Meta as its Chief AI Officer [^17^] [^15^]. Since the deal, scrutiny of worker conditions has vanished into corporate silence. As Casilli puts it: “Acquisition, then silence.”

Trauma as a Business Model

The psychological toll on content moderators is staggering. A 2025 survey of 76 workers from Colombia, Ghana, and Kenya documented 60 independent incidents of psychological harm, including PTSD, depression, anxiety, and substance dependence [^23^]. One former moderator reported reading up to 700 sexually explicit and violent pieces of text per day and said the psychological toll cost him his family.

A recent University of Minnesota study found that 52% of African content moderators met clinical thresholds for probable depression, with distress scores roughly double those of moderators in other regions [^21^]. Workers are often bound by strict non-disclosure agreements (NDAs) that legally prevent them from speaking about what they see or how it affects them, an enforced silence that compounds the trauma.

“Beheadings, child abuse, people making love—how many times can you watch these things before you stop feeling anything at all? We lose our ability to be human. We lose our emotions. We can’t be happy. We can’t be sad. We become robots.” — Content Moderator for TikTok, Turkey [^25^]

The Automation Cascade

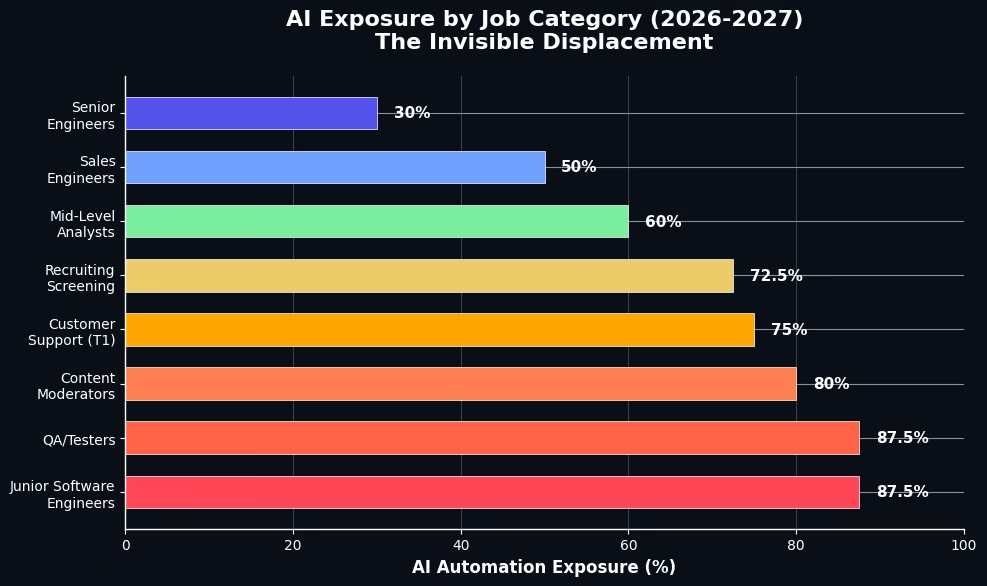

The displacement is no longer theoretical. In March 2026, Meta announced workforce reductions affecting approximately 16,000 jobs (roughly 20% of its staff), explicitly to help fund a $600 billion AI infrastructure plan through 2028 [^1^]. What distinguishes this from earlier tech layoffs is the mechanism: Meta is cutting headcount through “measured productivity displacement identified via internal AI monitoring systems.” In other words, the company claims it can now quantify how many human roles each AI tool replaces.

The IMF found that approximately 93% of U.S. jobs can be partially performed by AI, with companies positioned to shift more than $4.5 trillion in labor expenses to AI solutions [^1^]. The “Canaries” paper from the Stanford Digital Economy Lab found that early-career workers ages 22 to 25 (the “canaries in the coal mine”) in the most AI-exposed occupations have seen about a 13% relative decline in employment since late 2022 [^11^].

The Infrastructure You Never See

Beyond human labor, AI now increasingly manages the digital infrastructure itself. In 2026, AI-driven systems handle predictive scaling, self-healing networks, and automated remediation with little or no human intervention [^16^]. An estimated 78% of AI failures go unnoticed: the system gets something wrong, but no one catches it because the output looks plausible enough [^19^].

Modern AI infrastructure operates as a “continuous feedback loop”: data ingestion, transformation, model training, deployment, and inference—all interlocked and self-optimizing [^2^]. We have built a world where AI manages the infrastructure that runs AI, creating layers of automation so deep that even the engineers who built them cannot fully trace every decision.
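To make the “continuous feedback loop” concrete, here is a deliberately tiny sketch of the five stages named above. Everything in it is a hypothetical illustration, not any company’s actual pipeline: the “model” simply predicts the average of the data it has seen, the `registry` dictionary stands in for a model-serving system, and the transformation step just drops invalid negative readings. What matters is the shape of the loop: each new batch is cleaned, the model is retrained on everything accumulated so far, the new version is deployed, and inference immediately serves from it, with no human reviewing any step.

```python
from statistics import mean

# Hypothetical toy pipeline: stage names follow the text, but every
# implementation below is an illustrative assumption, not a real system.


def ingest(source: list[float], start: int, size: int) -> list[float]:
    """Data ingestion: pull the next batch of raw records from the source."""
    return source[start:start + size]


def transform(batch: list[float]) -> list[float]:
    """Transformation: clean the batch by dropping invalid (negative) readings."""
    return [x for x in batch if x >= 0]


def train(history: list[float]) -> float:
    """Model training: the toy 'model' is just the mean of all data seen so far."""
    return mean(history)


def deploy(registry: dict[str, float], model: float) -> None:
    """Deployment: publish the new model version, replacing the old one."""
    registry["current"] = model


def infer(registry: dict[str, float]) -> float:
    """Inference: serve a prediction from whichever model is currently deployed."""
    return registry["current"]


def run_loop(source: list[float], batch_size: int = 2) -> list[float]:
    """Run ingest -> transform -> train -> deploy -> infer once per batch."""
    registry: dict[str, float] = {}
    history: list[float] = []      # everything the loop has learned from so far
    predictions: list[float] = []
    for start in range(0, len(source), batch_size):
        batch = transform(ingest(source, start, batch_size))
        history.extend(batch)
        if not history:            # nothing valid to train on yet
            continue
        # Each cycle retrains on the accumulated data and redeploys automatically.
        deploy(registry, train(history))
        predictions.append(infer(registry))
    return predictions


if __name__ == "__main__":
    readings = [1.0, 3.0, -1.0, 5.0, 2.0, 4.0]
    print(run_loop(readings))  # [2.0, 3.0, 3.0]
```

Real pipelines insert monitoring, validation, and rollback between these stages; a loop without them will happily retrain and redeploy on whatever it is fed.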

The Asymmetric Future

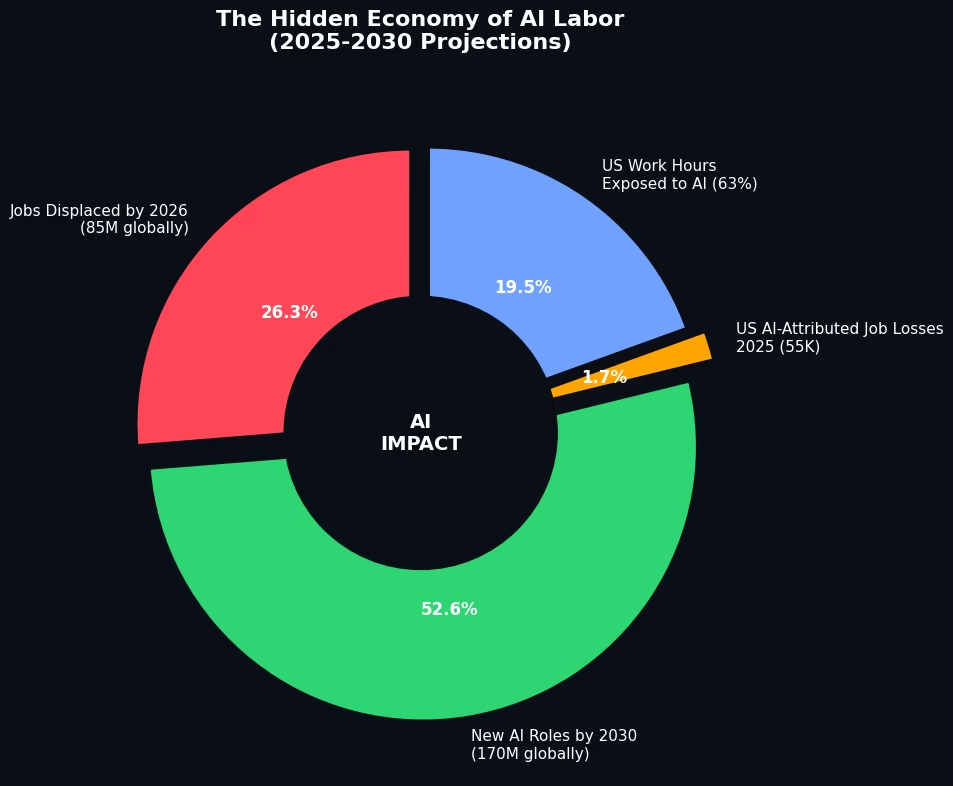

The economic asymmetry is stark: capital captures AI gains within 2-3 quarters, while labor absorbs losses on a 2-4 quarter lag [^1^]. This creates a dangerous window where GDP growth masks labor market deterioration. For every tech job lost, 3-5 service sector jobs face cascading risk.

Yet there is a counter-narrative. Forecasts anticipate 170 million new roles globally by 2030, driven in part by AI, and AI-related job postings are already 134% above 2020 levels [^8^]. The question is not whether AI will transform work; it already is. The central issue, as the ILO emphasizes, is ensuring that this transformation advances decent work and social justice [^4^].

Conclusion: Making the Invisible Visible

AI is not a disembodied intelligence floating in the cloud. It is a vast industrial apparatus built on human labor, physical infrastructure, and economic extraction. The “invisible workers” are not a bug in the system; they are its foundational feature. From the data centers humming in the desert to the moderators screening traumatic content in Nairobi, Manila, and Istanbul, the AI revolution has a human backbone.

As we stand in 2026, facing Meta’s $600 billion AI arms race and the silent disappearance of entry-level jobs, we must ask: Are we building a future where technology serves humanity, or one where humanity serves the technology? The answer lies not in the algorithms, but in our willingness to see the workers behind them.

References & External Sources

- [^1^] DWU Consulting — “The AI Revolution: Impact on the U.S. Economy (2026–2027)” (March 2026)

- [^2^] Mirantis — “Building AI Infrastructure: A Practical Guide” (April 2025)

- [^3^] Philonomist — “AI’s Invisible Workers: Interview with Antonio Casilli” (February 2026)

- [^4^] UN News — “How AI is already reshaping working conditions” (March 2026)

- [^5^] Introl Blog — “Infrastructure Automation with AI” (January 2026)

- [^6^] StackRoute Learning — “How AI is Automating Cloud Infrastructure” (March 2025)

- [^7^] Best Virtual Specialist — “Real-World Examples of AI in IT Infrastructure” (September 2025)

- [^8^] SQ Magazine — “AI Job Loss Statistics 2026” (February 2026)

- [^9^] Red Hat — “3 Automation Use Cases for AI in IT Operations” (May 2025)

- [^10^] European Journal of Computer Science — “Real-World Examples of AI-Powered Automation” (May 2025)

- [^11^] The Atlantic — “America Isn’t Ready for What AI Will Do to Jobs” (February 2026)

- [^12^] Scale Computing — “How Agentic AI Is Transforming Infrastructure Automation” (August 2025)

- [^13^] Yale Budget Lab — “Tracking the Impact of AI on the Labor Market” (April 2026)

- [^14^] Zylo — “The Rise of AI in the Workplace: New Stats + Pros & Cons” (February 2026)

- [^15^] Yahoo Finance — “Scale AI reduces workforce following Meta investment” (July 2025)

- [^16^] AI Labs — “How AI is redefining cloud infrastructure in 2026” (January 2026)

- [^17^] TIME — “Meta’s $15 Billion Scale Deal Could Leave Gig Workers Behind” (June 2025)

- [^18^] TIME — “Exclusive: A Push for Safety Rules to Protect AI Workers” (June 2025)

- [^19^] Bessemer Venture Partners — “AI Infrastructure Roadmap: Five frontiers for 2026” (March 2026)

- [^20^] GIZ/Aapti Institute — “Invisible Workers, Visible Harms” (2026)

- [^21^] TIME — “African Content Moderators Have Worse Mental Health than Global Peers” (March 2026)

- [^22^] Reddit r/automation — Community discussion on 2026 AI automation tasks

- [^23^] Brookings Institution — “Reimagining the future of data and AI labor in the Global South” (October 2025)

- [^24^] Leon Furze — “Teaching AI Ethics 2026: Human Labour” (January 2026)

- [^25^] UNI Global Union — “Global content moderators alliance demands Mental Health Protocols” (June 2025)

- [^26^] TSG Invest — “Scale AI Stock: $29B Valuation” (April 2026)

- [^27^] Stellium Consulting — “Guide to AI Automation Solutions 2026” (February 2026)

- [^28^] SOMO — “Big Tech sets unfair terms for AI data workers globally” (March 2026)

- [^29^] Oracle Cloud — “Behind the Scenes: GPU Power Smoothing for AI Training” (March 2026)